As you know, VMware released vSphere 7.0 a few weeks ago. Upgrading my serious Home Lab from 6.7 U3 to 7.0 was therefore an almost mandatory step for me. In this blog posting I want to describe the necessary steps and the problems I had to deal with.

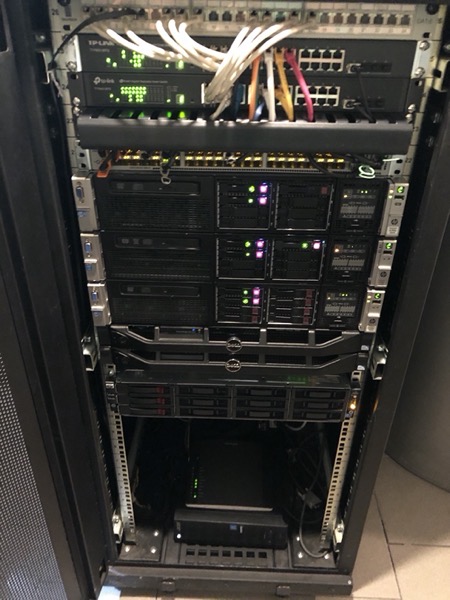

My Home Lab consists of a Compute Cluster with 3 HP DL380 G8 Servers (which are only occasionally powered on), and a Management Cluster with 2 old Dell R210 II Servers, which are always up and running. Let’s have a look at how I upgraded both clusters.

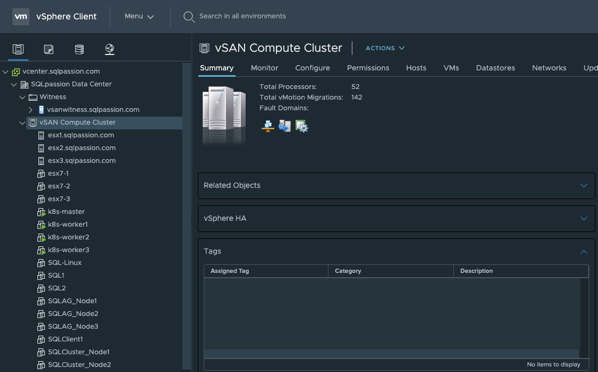

Compute Cluster

Prior to the upgrade of the individual ESXi Hosts, I upgraded the VCSA Appliance to 7.0. This was quite a straightforward process, which I have already described in a previous blog posting. After the upgrade of the VCSA Appliance had completed successfully, I started with the upgrade of my Compute Cluster.

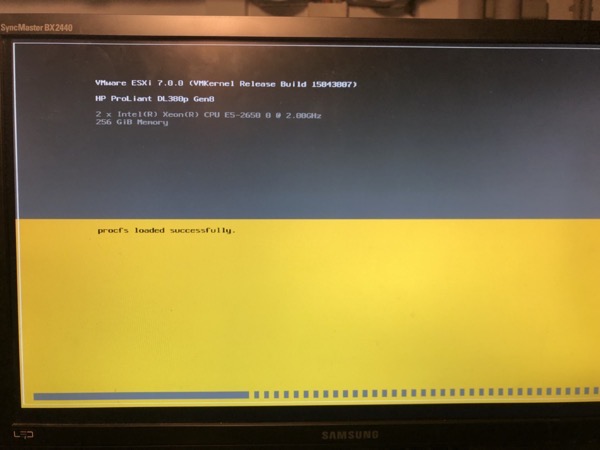

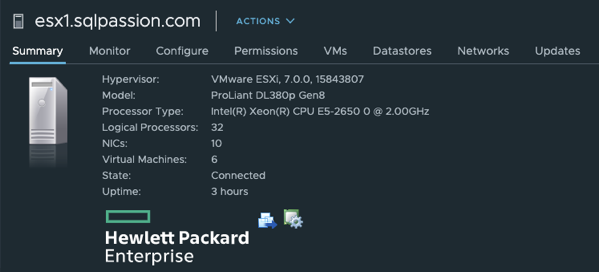

The Compute Cluster is the beef of my Home Lab and consists of 3 HP DL380 G8 Servers with two 8-Core CPU sockets and 256 GB of RAM in each server. In addition, I’m using an Intel XL710 network card in each server, which has four 10 GBit ports (used for vSAN traffic) and two 40 GBit ports (used for vMotion traffic).

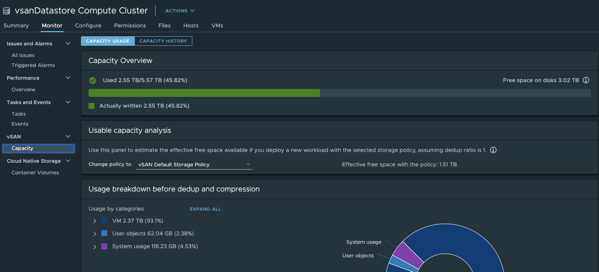

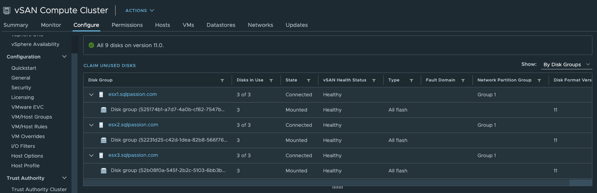

From a storage perspective I’m running on VMware vSAN, where I use the Intel Optane 900P device for the Caching Tier, and multiple Samsung 850 Pro SSDs for the Capacity Tier. My vSAN Datastore in the Compute Cluster currently has a raw capacity of 5.5 TB.

The upgrade of the 3 ESXi Hosts was quite a straightforward process and was completed in about an hour, including all the necessary reboots.

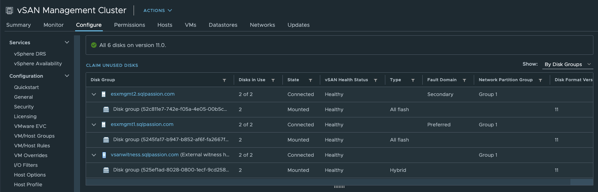

After the successful upgrade of the ESXi Hosts, I also upgraded the Distributed Switch to version 7.0 and migrated the vSAN Datastore to version 11.0.

In summary, I can say that I had really no problems upgrading the HP DL380 G8 Servers to vSphere 7.0; everything worked as expected.

Management Cluster

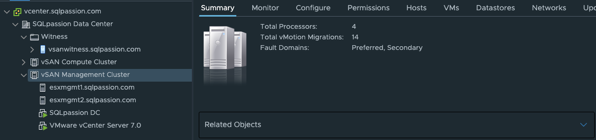

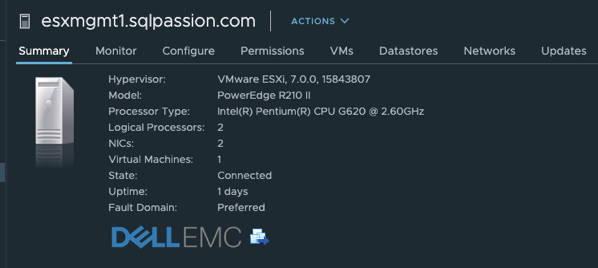

After the successful upgrade of the Compute Cluster, I also wanted to upgrade my Management Cluster, which consists of 2 Dell R210 II Servers that provide me with a 2-node vSAN Datastore. To provide fault tolerance here, I run the necessary vSAN Witness in VMware Workstation Pro 15 on a lower-end Windows 10 based machine.

Because the Dell R210 II Servers are already quite old, I was somewhat worried about the upgrade to vSphere 7.0, and whether everything would work as expected. My Management Cluster is not that powerful, and it only hosts 2 Virtual Machines: the VCSA Appliance, and my Domain Controller, which provides me with DNS, VPN, and other services. So, it’s quite an important VM to me.

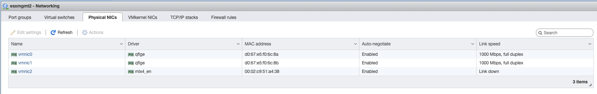

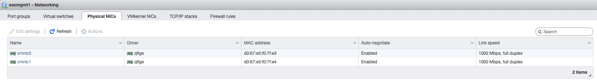

Interestingly enough, I didn’t have any problems during the upgrade. I just got 2 warnings: one about the CPUs, which will soon be unsupported (I’m aware of this), and one saying that a PCI device is NOT supported anymore. Unfortunately, this PCI device is the 10 GBit network card that I use in both Dell Servers for the vMotion/vSAN traffic. As you can see in the following 2 pictures, vmnic2 is just gone after the upgrade to 7.0.

Fortunately, the Management network is still working. Therefore, I’m currently just routing the vMotion/vSAN network traffic through the Management network, at a speed of 1 GBit… If any of you has an idea how to get my network card working again in 7.0, please leave me a comment.
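To get a rough feeling for what falling back from 10 GBit to 1 GBit means, here is a minimal back-of-the-envelope sketch in Python. It assumes an ideal link with no protocol overhead and no competing traffic, and the 16 GiB VM memory size and the `transfer_seconds` helper are purely hypothetical values chosen for illustration, not measurements from my lab.

```python
def transfer_seconds(size_gib: float, link_gbit_per_s: float) -> float:
    """Ideal time in seconds to move size_gib GiB over a link.

    Assumption: the link runs at full line rate (1 GBit/s = 1e9 bits/s)
    and protocol overhead is ignored, so real transfers will be slower.
    """
    size_bits = size_gib * 1024**3 * 8
    return size_bits / (link_gbit_per_s * 1e9)


if __name__ == "__main__":
    vm_memory_gib = 16  # hypothetical VM memory size, for illustration only
    for speed in (1, 10):
        seconds = transfer_seconds(vm_memory_gib, speed)
        print(f"{speed} GBit/s: about {seconds:.1f} s")
```

Under these assumptions, the same amount of data takes ten times longer over the 1 GBit Management network than over the 10 GBit card.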

Besides the network card problem, everything else worked as expected, and even my Dell R210 II Servers are successfully running vSphere 7.0.

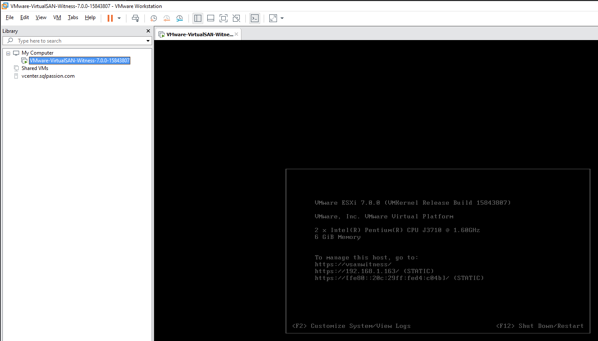

Besides the upgrade of both ESXi Hosts in the Management Cluster, I also had to upgrade the vSAN Witness Appliance, which provides fault tolerance for my 2-node vSAN deployment. As I said previously, I run the Witness Appliance within VMware Workstation Pro 15.

After the upgrade of the Witness Appliance, I was also able to migrate the vSAN Datastore to version 11.0.

Summary

My whole Home Lab setup is now successfully migrated to vSphere 7.0. One of my next (big) steps is to deploy NSX-T 3.0, to be able to work with and learn about the Kubernetes Integration in vSphere 7.0. Stay tuned…

Thanks for your time,

-Klaus

6 thoughts on “My Home Lab Upgrade to vSphere 7.0”

I’ve run into issues with VMware not accepting older network cards as well. They were different network cards, but the process might be the same.

Below are two links from someone else’s site that I’ve used without a problem.

https://vmexplorer.com/2018/06/08/home-lab-gen-iv-part-v-installing-mellanox-hcas-with-esxi-6-5/

https://vmexplorer.com/2017/09/23/create-iso-with-esxi-6-0u2-and-realtek-8168-drivers/

Klaus my man,

this is a very good and elaborate setup. However, I can only imagine how much your electric bills are each month.

Hello Shawn,

The power costs are not that high, because the HP servers are not always up and running. Only when I need them.

Thanks,

-Klaus

How much does your lab cost? It’s nice to have for testing. Do you have a complete list of both hardware and software?

This blog posting tells you more about the gear I use: https://www.sqlpassion.at/archive/2018/01/15/how-i-designed-my-vmware-vsan-based-home-lab/

Would you please check and share if you see any sensors under monitoring?

I have installed VMware ESXi 7.0.2-17867351-HPE-702.0.0.10.7.0.52 on a DL580 Gen8, but under Host -> Monitor -> Hardware -> System sensors, I see “No sensor data available”.

How did you fix the Gen8 sensors provided by SMX on ESXi 7?