Years ago, when I switched from Windows to Mac, people regularly told me that I was crazy. How could I be stupid enough to work on macOS when I depend on SQL Server? In my case it wasn’t that terrible, because my main work is content creation (writing blog postings, articles, presentations, training videos), and very often I only connected to a remote SQL Server through an RDP connection. Working natively on macOS was therefore not a big deal for me, and as a last resort I always had a Windows VM running in VMware Fusion on my Mac.

But since the introduction of the Container concept through Docker, and the possibility to run SQL Server directly in a Container, my life has changed for the better. I can now run SQL Server 2017+ directly on my Mac, and I don’t really need a Windows VM anymore. In this blog posting I want to show you how you can do the same and run SQL Server directly on your Mac in a Docker Container.

Installing SQL Server in a Docker Container

Before you can install SQL Server in a Docker Container on the Mac, you of course have to install and configure Docker itself. I don’t want to go into the details of how to install Docker, because the necessary steps are very well documented.

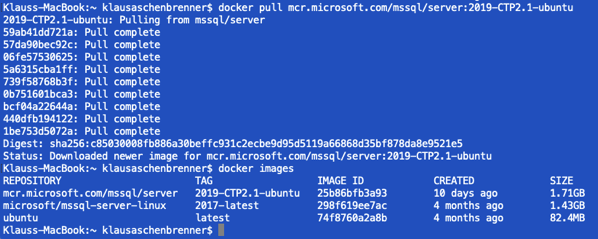

Before you can create a Docker Container for SQL Server, you have to pull the correct Docker Image from the Docker Registry. In my case I have decided to try out the latest CTP version of SQL Server 2019:

docker pull mcr.microsoft.com/mssql/server:2019-CTP2.1-ubuntu

When you have pulled the image, you can see it by running the docker images command in your Terminal.

You can think of a Docker Image as an ISO file: it’s just an image, and you can’t run it directly, because you first have to install it. Therefore we also have to “install” the pulled Docker Image. In Docker you “install” an image by running it, and that creates the actual Docker Container, which is what you finally execute. Let’s run our Docker Image with the docker run command:

docker run -e 'ACCEPT_EULA=Y' -e 'SA_PASSWORD=passw0rd1!' -p 1433:1433 --name sql2019_ctp2 -d mcr.microsoft.com/mssql/server:2019-CTP2.1-ubuntu

As you can see from the command line, you have to pass in a lot of different parameters. Let’s take a more detailed look at them:

- -e 'ACCEPT_EULA=Y'

- With the -e option you set an environment variable on which SQL Server depends. In our case we have to accept the EULA to be able to use SQL Server.

- -e 'SA_PASSWORD=passw0rd1!'

- With the SA_PASSWORD environment variable we set the password for the SA login.

- -p 1433:1433

- With the -p option we bind a port on our host machine (in my case on the Mac) to a port in the Container. The port on the left side of the colon is the port on the host machine, and the port on the right side of the colon is the port in the Container. In my case I bind the default SQL Server port of 1433 within the Container to the port 1433 on my Mac.

- Therefore I can directly access the exposed SQL Server Container through the IP address of my Mac on the network. If you have multiple SQL Server Containers, you can also bind them to different ports on your host machine to access them independently from each other.

- --name

- With the --name option we assign a custom name to our Docker Container.

- -d

- And with the -d option we run the Docker Container detached from the Terminal. This just means that you can close your Terminal, and your Docker Container is still running in the background. The last argument of the command is the Docker Image that we have pulled previously.
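
The port mapping described in the list above can be sketched as a small shell helper. Take it as an illustration only: the function name sql_run_cmd, the container names, and the host port 1434 are assumptions made for this example. The helper only prints a docker run command for each Container instead of executing it, so you can review the line before you run it.

```shell
#!/bin/sh
# Hypothetical helper: print (not execute) a docker run command that maps
# the given host port to SQL Server's default port 1433 in the Container.
# Password and image tag are the ones used in this article.
sql_run_cmd() {
    name=$1        # container name (assumed, e.g. sql2019_a)
    host_port=$2   # port on the Mac, left side of the colon
    password='passw0rd1!'
    image='mcr.microsoft.com/mssql/server:2019-CTP2.1-ubuntu'
    printf "docker run -e 'ACCEPT_EULA=Y' -e 'SA_PASSWORD=%s' -p %s:1433 --name %s -d %s\n" \
        "$password" "$host_port" "$name" "$image"
}

# Two Containers, each reachable through its own port on the host.
sql_run_cmd sql2019_a 1433
sql_run_cmd sql2019_b 1434
```

With such a setup you would connect to the second Container through port 1434 on your Mac, while SQL Server inside the Container still listens on 1433.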

After you have executed that Docker command, your Docker Container is up and running.
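
If the Container stops again right after starting, one likely cause is an SA password that does not satisfy SQL Server’s default password policy: at least 8 characters, with characters from at least three of the four sets uppercase letters, lowercase letters, digits, and symbols. The following is a minimal sketch of a pre-flight check. The function name sa_password_ok is made up for this example, and the check only approximates the policy.

```shell
#!/bin/sh
# Hypothetical pre-flight check (not part of Docker or SQL Server):
# succeeds if the password has at least 8 characters and uses at least
# three of the four classes: uppercase, lowercase, digits, symbols.
sa_password_ok() {
    pw=$1
    [ "${#pw}" -ge 8 ] || return 1
    classes=0
    case $pw in *[[:upper:]]*) classes=$((classes + 1)) ;; esac
    case $pw in *[[:lower:]]*) classes=$((classes + 1)) ;; esac
    case $pw in *[[:digit:]]*) classes=$((classes + 1)) ;; esac
    # Whatever remains after deleting letters and digits counts as symbols.
    symbols=$(printf '%s' "$pw" | tr -d '[:alnum:]')
    if [ -n "$symbols" ]; then classes=$((classes + 1)); fi
    [ "$classes" -ge 3 ]
}

for candidate in 'passw0rd1!' 'pwd123'; do
    if sa_password_ok "$candidate"; then
        echo "$candidate: looks acceptable"
    else
        echo "$candidate: too weak for the default policy"
    fi
done
```

The output of docker logs for your Container should tell you the actual reason if the Container still goes down.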

Accessing SQL Server on a Mac

We now have SQL Server 2019 up and running in a Docker Container. But how do we access SQL Server? Of course, I can start up a Windows VM and use SQL Server Management Studio to access SQL Server. But then I’m again dependent on a Windows VM, which also needs periodic updates, and it would also be a huge overhead to deploy a whole Windows VM just for SQL Server Management Studio…

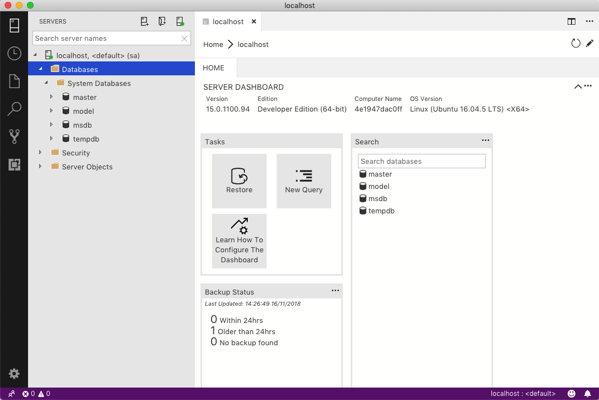

Therefore let’s introduce Azure Data Studio! Azure Data Studio was formerly known as SQL Operations Studio and it is a client application with which you can manage SQL Server – natively on Windows, Linux, and Mac!!!

As you can see from the previous picture, I have connected here directly to localhost, because in the last step we have exposed the port 1433 of the Docker Container to our host machine. Don’t get me wrong: compared to SQL Server Management Studio, Azure Data Studio is “nice” but… 😉

But hey, I can run it directly on my Mac (without needing a Windows VM), I can run SQL statements, I have access to Estimated and Actual Execution Plans, and very importantly – it’s extensible. What more do I need? For the kind of work that I’m doing, it’s enough.

Restoring your first Database

When you look back at the previous picture, you can see that you got a vanilla installation of SQL Server 2019. There are our system databases, the crazy default settings, and that’s it. There are of course currently no other databases. So you have to create your own databases, or you take an existing database (maybe from a Windows-based SQL Server installation) and restore it in your Docker Container. Let’s do that now.

In my case I want to show you the steps that are necessary to restore AdventureWorks in the Docker Container. First of all you have to copy your backup file into the Docker Container. But you can’t do a regular cp command from the Terminal, because that command has no idea about your Docker Container. Which somehow makes sense…

Therefore your Docker installation offers you the command docker cp, with which you can copy a local file into a Docker Container and vice versa. Let’s now take our backup of AdventureWorks and copy it into the folder /var/backups of our Docker Container:

docker cp AdventureWorks2014.bak sql2019_ctp2:/var/backups/AdventureWorks2014.bak

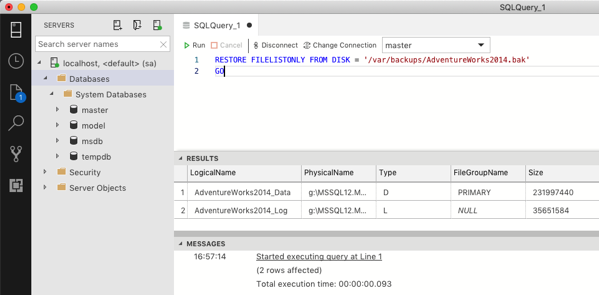

After you have copied the backup file, you can restore the database. But the destination folders are different from those on a Windows-based SQL Server installation, so we also have to move our data and log files. In the first step I have therefore executed the following command to get the logical file names from our database backup.

RESTORE FILELISTONLY FROM DISK = '/var/backups/AdventureWorks2014.bak'

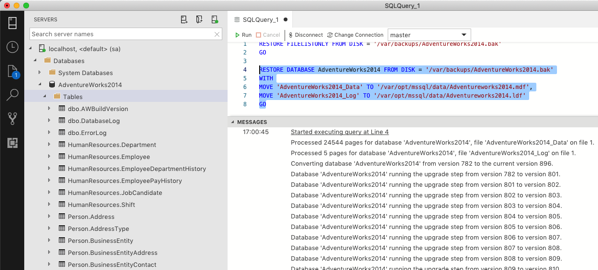

And based on that information, let’s perform now the restore of our database.

RESTORE DATABASE AdventureWorks2014 FROM DISK = '/var/backups/AdventureWorks2014.bak'

WITH

MOVE 'AdventureWorks2014_Data' TO '/var/opt/mssql/data/Adventureworks2014.mdf',

MOVE 'AdventureWorks2014_Log' TO '/var/opt/mssql/data/Adventureworks2014.ldf'

As you can see I’m moving the data and log files into the folder /var/opt/mssql/data. And now we have our AdventureWorks database restored in our Docker Container.
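
If you restore more than one backup this way, the statement can be generated instead of typed. The following is a rough sketch only: the helper name restore_sql is an assumption made for this example, and you still have to look up the logical file names with RESTORE FILELISTONLY first. The helper just prints the T-SQL, which you can then paste into Azure Data Studio.

```shell
#!/bin/sh
# Hypothetical helper: print a RESTORE statement that moves the data and
# log files into /var/opt/mssql/data, the folder used in this article.
restore_sql() {
    db=$1          # database name
    bak=$2         # path of the backup file inside the Container
    data_name=$3   # logical data file name from RESTORE FILELISTONLY
    log_name=$4    # logical log file name from RESTORE FILELISTONLY
    cat <<EOF
RESTORE DATABASE [$db] FROM DISK = '$bak'
WITH
    MOVE '$data_name' TO '/var/opt/mssql/data/$db.mdf',
    MOVE '$log_name' TO '/var/opt/mssql/data/$db.ldf';
EOF
}

restore_sql AdventureWorks2014 /var/backups/AdventureWorks2014.bak \
    AdventureWorks2014_Data AdventureWorks2014_Log
```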

When you are finished with your work in your Docker Container, you can stop the Container with the following command:

docker stop sql2019_ctp2

And with a docker start command, you can start your Container again:

docker start sql2019_ctp2

In that case, all the changes that you have made in your Docker Container (like restoring the AdventureWorks database) are persisted across restarts.

Summary

Running SQL Server natively on a Mac or on Linux was always a huge April Fools’ joke. But with the introduction of Docker, and the SQL Server support for it, it’s now real. You can now run SQL Server natively on the Mac, and with the help of Azure Data Studio you can even access SQL Server with a native macOS application. We have really exciting times ahead of us!

Thanks for your time,

-Klaus

3 thoughts on “Running SQL Server with Docker on the Mac”

Hi Klaus, I think there’s a copy&paste error for the docker run command. This should do the trick:

docker run -e 'ACCEPT_EULA=Y' -e 'SA_PASSWORD=passw0rd1!' -p 1433:1433 --name sql2019 -d mcr.microsoft.com/mssql/server:2019-CTP2.1-ubuntu

I am having an issue connecting to the SQL Container after running

docker pull microsoft/mssql-server-linux

docker run -d --name sql_server_local -e 'ACCEPT_EULA=Y' -e 'SA_PASSWORD=pwd123' -p 1433:1433 -d microsoft/mssql-server-linux

When I run `docker ps` it does show the active running container. But when I try to access it from DataGrip or Azure Data Studio using hostname:localhost, user:sa, password:[given], port:1433, it’s not connecting, and the running container immediately goes down.

Any idea why this is happening? How should I access this container service in my application?

Thanks for this guide. I tried running the SQL Server on Azure Data Studio and I don’t seem to know what the Username field is. Please assist.