Today I want to talk about a very important topic in SQL Server – setting a Processor Affinity – and the unwanted side effects that you introduce with this approach. First of all I want to talk a little bit about what a Processor Affinity is, and how it relates to SQL Server.

What is a Processor Affinity

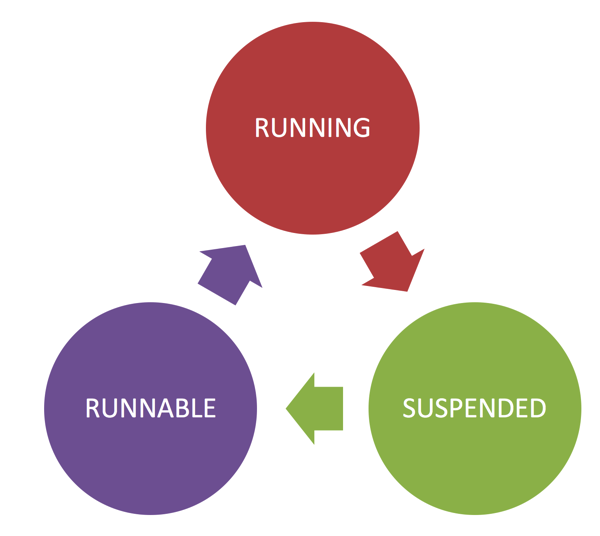

If you run SQL Server with a default configuration, SQL Server will schedule your queries on all available CPU cores. For each CPU core you get a so-called Scheduler, which implements a Query State Machine with the states RUNNING, SUSPENDED, RUNNABLE.

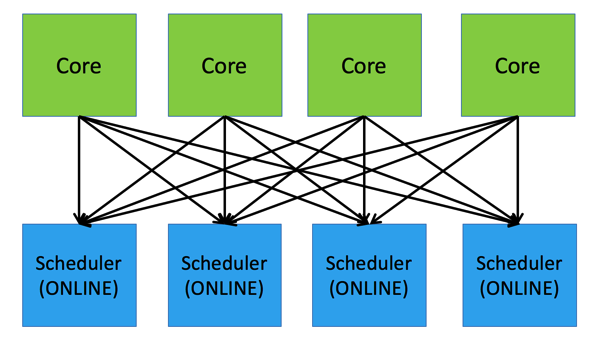

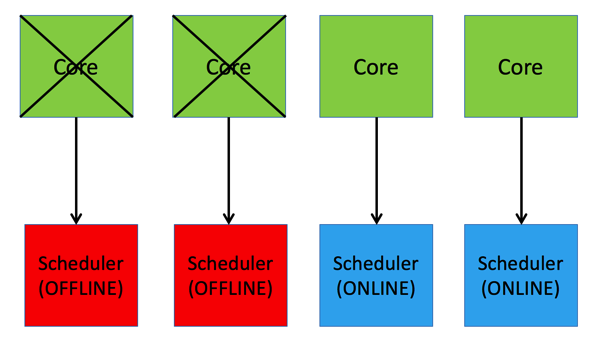

This Query State Machine is very important, because it is also tracked by the Wait Statistics, which give you a great overview why SQL Server is slow. The most important point is now that a Scheduler is NOT bound to a specific CPU core. Each Scheduler can run on every CPU core. The following picture illustrates this very important concept.

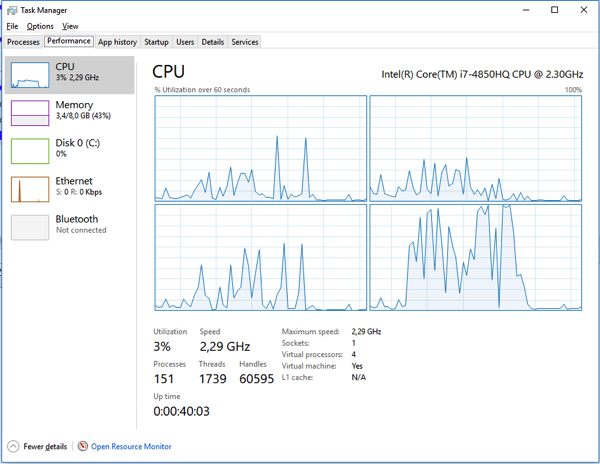

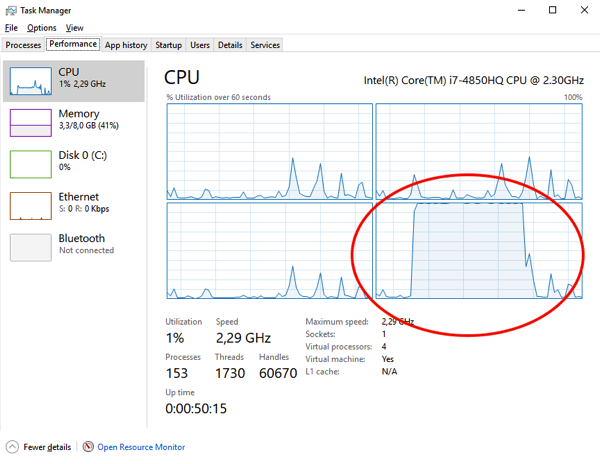

Therefore, when an incoming query is assigned to a Scheduler, the Scheduler can be run on each CPU core. There is no 1:1 mapping between a Scheduler and a CPU core. The following picture shows you the CPU utilization during the execution of a Single-Threaded query which is mostly CPU bound.

As you can see all 4 cores have some CPU utilization, but there is no CPU core that runs continuously at 100%. With the following query you can also check on which CPU cores a specific query (based on the SPID) can be executed.

SELECT r.session_id, t.affinity FROM sys.dm_exec_requests r JOIN sys.dm_os_workers w ON w.task_address = r.task_address JOIN sys.dm_os_threads t ON t.worker_address = w.worker_address WHERE r.session_id = 53

With the default configuration of SQL Server, and a 4 core system, you will get back the value 15 – which is in binary 1111. The binary value 1111 just means that the Scheduler of this query can run on each core of our 4 core system. With this example you can see quite easily how a Scheduler moves around between the individual CPU cores.

Setting a Processor Affinity

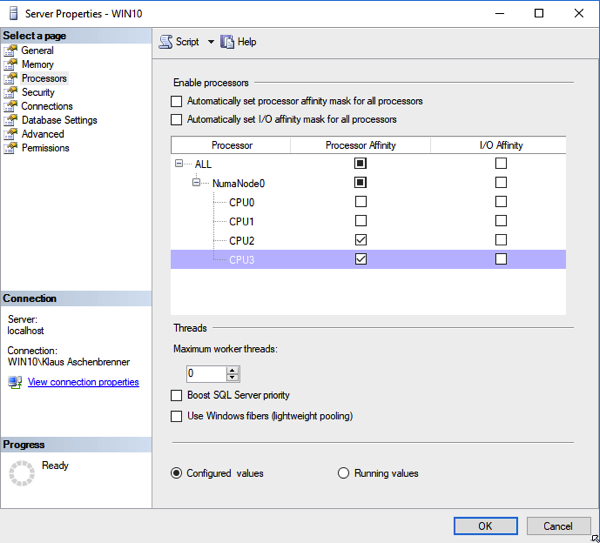

Imagine now you want to restrict which specific CPU cores your SQL Server instance should use. In that case you can reconfigure your SQL Server Instance and tell SQL Server to use only a subset of CPU cores.

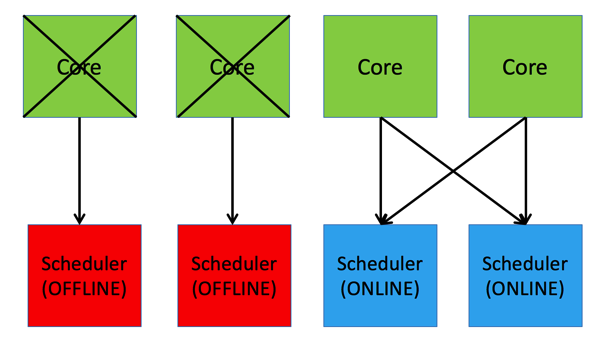

In this configuration you are telling SQL Server to use only the CPU cores 2 and 3, and the cores 0 and 1 should not be used by SQL Server. Such a configuration is done very often in combination with multiple SQL Server instances on the same machine. When you perform that configuration, SQL Server will first take the Schedulers 0 and 1 offline.

But in addition you are also telling SQL Server that there is now an Affinity between a Scheduler and a CPU core. As you can see from the previous picture, there is now a Scheduler Affinity set:

- Scheduler 2 can now only run on CPU core 2

- Scheduler 3 can now only run on CPU core 3

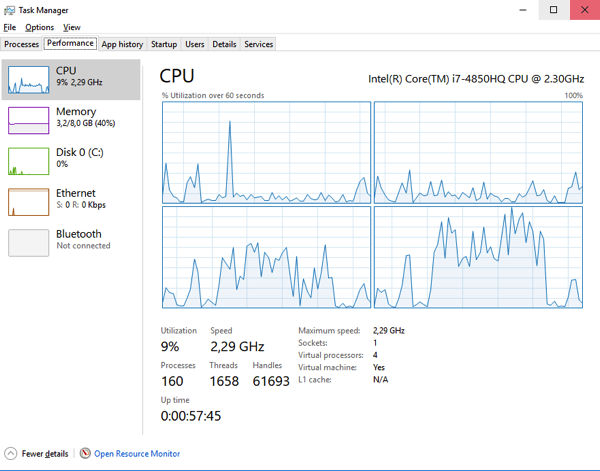

This means now that a Scheduler is directly bound to a CPU core, and that the Scheduler can’t decide on a CPU core anymore. You can also verify this behaviour through Task Manager. When you are running a query again, you can see now that core 3 or 4 will go up to 100% constantly:

If in that case the core is blocked, your SQL Server query will be also blocked. I’m always demonstrating this behaviour by using a simple .NET Application, which is bound to a specific CPU core (in our case core 2 or 3), and just burns down CPU cycles in a simple endless loop.

Setting a Processor Affinity in the correct way

Summary

By default SQL Server doesn’t have a Processor Affinity, which is a good thing. But as soon as you restrict SQL Server to use a subset of CPU cores, you will also have internally a Processor Affinity set. And therefore it’s very important that you know these unwanted side effects when you working on your SQL Server instance configuration, and how to avoid it with Trace Flag 8002.

Thanks for your time,

-Klaus

9 thoughts on “Setting a Processor Affinity in SQL Server – the (unwanted) Side-Effects”

Great article Klaus!

Please blog more on SQLOS…

No need for that – checkout http://www.sqlonice.com 🙂

Really interesting stuff!

Cool article! Thx!

Hi Klaus,

Is there any dmv (sys.schedulers?) where I can see the current binding of the scheduler to a CPU like a snapshot of the current state?

The view should tell me scheduler1 is currently on CPU1, next time I will execute the dmv it will tell me scheduler1 is now on CPU3…

Thanks!

Hello Torsten,

Check sys.dm_os_schedulers and the column cpu_id: https://docs.microsoft.com/en-us/sql/relational-databases/system-dynamic-management-views/sys-dm-os-schedulers-transact-sql

Thanks,

-Klaus

Please here what wait it will elaborate–sos_schdeuler_yield.

No, because the wait will occur in the Windows OS, and not within SQL Server.

Hi Klaus,

Great article! I do have a situation in which it is unclear to me if I should set any affinity mask or not.

I have a physical server with this processor configuration: SQL Server detected 2 sockets with 18 cores per socket and 36 logical processors per socket, 72 total logical processors; using 48 logical processors based on SQL Server licensing.

I have SQL Server Standard 2016 installed, SP2 CU10, hence I also get soft-NUMA enabled by default and used since I have more than 8 cores in one node. From the log I can see this “Automatic soft-NUMA was enabled because SQL Server has detected hardware NUMA nodes with greater than 8 physical cores.”.

In this situation, what would be the best way to go, since I cannot change the physical setup of the server. Should I use affinity masking to limit the amount of cores available to SQL Server due to licensing? SQL does it already, but then how do I make sure that the server is performing at its best, taking MAXDOP into account also. And what should be the value for MAXDOP is this case? 6, 8, 9?

Your input would be greatly appreciated!

Thanks a lot!