ColumnStore Indexes are great! They are a real performance booster for Data Warehousing and Reporting workloads. In combination with Clustered ColumnStore Indexes you get a huge compression benefit over regular RowStore Indexes (Clustered Indexes, Non-Clustered Indexes). And creating a Clustered ColumnStore Index is quite easy:

CREATE CLUSTERED COLUMNSTORE INDEX ccsi ON TableName GO

But is that all that you have to know about Clustered ColumnStore Indexes? Not really, as you will see in this blog posting…

What are ColumnStore Segments?

I always kid during my various conference talks and public training sessions and say that you don’t have to know anything more about Indexing as soon as you get started with Clustered ColumnStore Indexes in SQL Server. There are so many advantages with Clustered ColumnStore Indexes, which lead to massive performance improvements:

- Better Compression

- Batch Mode Execution

- Less IO & better Memory Management

- Segment Elimination

Creating a Clustered ColumnStore Index in SQL Server is quite easy as you see from the following example:

CREATE CLUSTERED COLUMNSTORE INDEX idx_ci ON FactOnlineSales GO

You only need to specify the table name, nothing more. You even don’t need to worry about Clustered Key Columns, because this concept doesn’t apply to ColumnStore Indexes. Easy, isn’t it? Let’s run a simple query with that Clustered ColumnStore Index in place:

-- Segment Elimination doesn't work quite well, because -- we have a lot of overlapping Segments. SELECT DateKey, SUM(SalesAmount) FROM FactOnlineSales_Temp WHERE DateKey >= '20090101' AND DateKey <= '20090131' GROUP BY DateKey GO

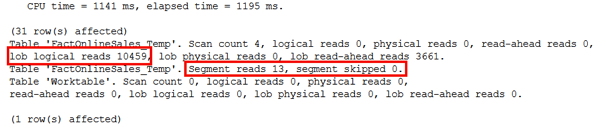

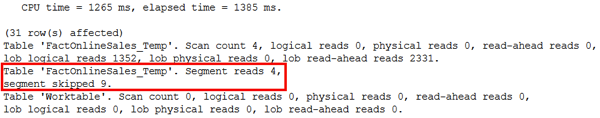

The query is quite fast, because SQL Server can use the Clustered ColumnStore Index for query execution. And the output from STATISTICS IO also shows you that not many LOB Logical Reads were needed for accessing the Clustered ColumnStore Index:

But what about these Segment Read and Segment Skipped metrics?

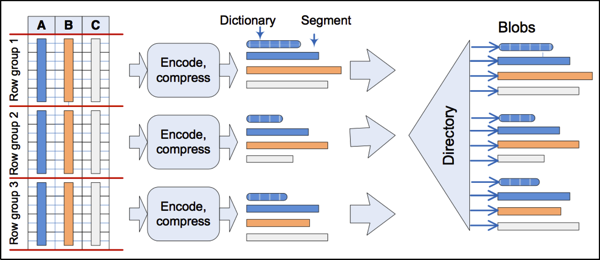

As you might know a ColumnStore Index is internally subdivided into so-called ColumnStore Segments. A ColumnStore Segment is always specific to a specific column and a Row Group. A Row Group contains about 1 millions of rows. The following picture illustrates this very important concept:

Source: http://research.microsoft.com/apps/pubs/default.aspx?id=193599

What is ColumnStore Segment Elimination?

The most important thing here is that SQL Server stores a Minimum and Maximum Value internally for each ColumnStore Segment. And based on these values SQL Server can perform so-called Segment Elimination. Segment Elimination means that SQL Server only reads those Segments (while accessing a ColumnStore Index) that contain requested data. You can think about it in the same way as Partition Elimination when you work with Partitioned Tables. But the elimination happens here at the ColumnStore Segment Level.

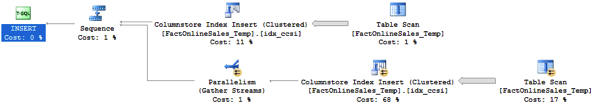

As you have seen in the previous picture, SQL Server wasn’t able to eliminate any segments during the ColumnStore Index access, because by default you have no sorting order in the ColumnStore Index. The sorting order of your data depends on how SQL Server reads the data in the Execution Plan when you create the ColumnStore Index:

As you can see the Clustered ColumnStore Index was created by reading from the Heap Table that initially contained the data. And therefore you have no sorting order in the Clustered ColumnStore Index, and therefore the Segment Elimination can’t work perfectly for you.

How can you improve that situation? Enforce a sorting order in your data by first creating a traditional RowStore Clustered Index, and change it to a Clustered ColumnStore Index! Ouch that hurts...

-- Now we create a traditional RowStore Clustered Index to sort our -- table data by the column "DateKey". CREATE CLUSTERED INDEX idx_ci ON FactOnlineSales_Temp(DateKey) GO -- "Swap" the Clustered Index through a Clustered ColumnStore Index CREATE CLUSTERED COLUMNSTORE INDEX idx_ci ON FactOnlineSales_Temp WITH (DROP_EXISTING = ON) GO

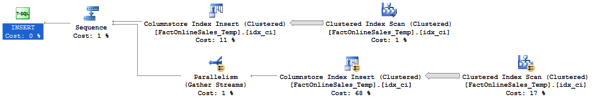

With a traditional Clustered RowStore Index in place, the Query Optimizer will reference this index in the Execution Plan when you build the Clustered ColumnStore Index:

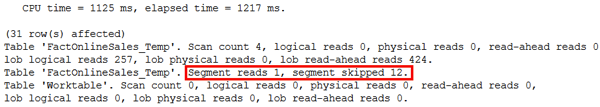

And as a side-effect you should have now sorted data in the Clustered ColumnStore Index, and Segment Elimination should work quite well:

-- Segment Elimination works better than previously, but still not perfectly. SELECT DateKey, SUM(SalesAmount) FROM FactOnlineSales_Temp WHERE DateKey >= '20090101' AND DateKey <= '20090131' GROUP BY DateKey GO

But when you look again at the output of STATISTICS IO, SQL Server still has to read a lot of segments, and only skips a few of them:

But why can’t SQL Server skip all segments other than the one we are interested in? The problem lies in the creation of the Clustered ColumnStore Index. When you look back to the previous Execution Plan, you can see that the ColumnStore Index Insert (Clustered) operator was running in parallel – across multiple worker threads. And these worker threads are again destroying the order of your data in the Clustered ColumnStore Index! You read your data presorted from the Clustered RowStore Index, and then the parallel creation of the Clustered ColumnStore Index reshuffles your data... That hurts - again!

You can only solve that problem by creating the Clustered ColumnStore Index with a MAXDOP of 1:

CREATE CLUSTERED COLUMNSTORE INDEX idx_ci ON FactOnlineSales_Temp WITH (DROP_EXISTING = ON, MAXDOP = 1) GO

This sounds terrible, and it is terrible!!! But it’s the only solution to let you preserve the ordering of your data in the ColumnStore Index. When you later read from the Clustered ColumnStore Index, you will then see that SQL Server was finally able to skip all Segments other than the one you are interested in:

Summary

ClusteredColumnStore Indexes are great – really great. But by default the Segment Elimination can’t be performed very well, because you have no predefined sorting order in your Clustered ColumnStore. Therefore you should always make sure that the Segment Elimination works well, when you tune your ColumnStore queries. And sometimes you even have to work against SQL Server by using a MAXDOP 1 to preserve the ordering of your data...

Thanks for your time,

-Klaus