I have already blogged a few times about In-Memory OLTP and why I don’t currently recommend this technology to my customers. Today I want to talk a little bit more about the memory requirements behind In-Memory OLTP, and what happens if you have too little memory.

It’s all about Memory!

We all know that a long time ago a famous person said that 640K of memory ought to be enough for anyone. He was so wrong! The memory requirements for In-Memory OLTP are quite high:

- Every Hash Bucket of a Hash Index consists of a 64-bit (8-byte) pointer

- Every time you change a record, a new version is written that is stored in memory

Microsoft recommends that you have at least twice as much memory as the size of your Memory-Optimized Table. The additional space is needed to make room for the row versions that are created when you change records.
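To get a feeling for how much memory a Memory-Optimized Table and its indexes really consume, one option is the `sys.dm_db_xtp_table_memory_stats` DMV. The following query is only a minimal sketch: it has to run in the context of the database that contains the tables, and the column aliases are my own choice.

```sql
-- Memory allocated and used per Memory-Optimized Table in the current database.
SELECT
    OBJECT_SCHEMA_NAME(object_id) AS SchemaName,
    OBJECT_NAME(object_id) AS TableName,
    memory_allocated_for_table_kb / 1024 AS TableAllocatedMB,
    memory_used_by_table_kb / 1024 AS TableUsedMB,
    memory_allocated_for_indexes_kb / 1024 AS IndexAllocatedMB,
    memory_used_by_indexes_kb / 1024 AS IndexUsedMB
FROM sys.dm_db_xtp_table_memory_stats
ORDER BY memory_allocated_for_table_kb + memory_allocated_for_indexes_kb DESC;
GO
```

Comparing these numbers with the memory that is available to SQL Server gives a rough idea of whether the "2 times" recommendation is met.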

A few weeks ago, a customer of mine had a very interesting question: what happens when you don’t have enough memory and In-Memory OLTP can’t rebuild the Hash Indexes during the startup of the database? This sounds like quite a simple question, but it is very important to know how In-Memory OLTP reacts in this specific scenario.

Imagine you are running In-Memory OLTP within a virtual machine and at some point in time your VMware admin gives your virtual machine less memory than previously. I have already seen this quite often in combination with Virtualization.

Let’s try to crash In-Memory OLTP!

Let’s try to engineer such a scenario. In the first step I want to show you what happens when you create a Memory Optimized Table without having enough physical RAM available. The following listing creates a new Memory Optimized Table with 4 Hash Indexes, where every Hash Index consists of 250 million Hash Buckets. Therefore the memory requirement for the complete table is around 7.4 GB of RAM, but I’m running my virtual machine with only 8 GB of RAM!

    -- 250 000 000 x 4 = 1 000 000 000 Hash Buckets of 8 bytes: 8 000 000 000 bytes = 7.4 GB of memory overhead.
    -- The following query will fail, because there is too little memory available.
    CREATE TABLE Foo
    (
        Col1 INT NOT NULL PRIMARY KEY NONCLUSTERED HASH WITH (BUCKET_COUNT = 250000000),
        Col2 INT NOT NULL INDEX idx_Col2 NONCLUSTERED HASH WITH (BUCKET_COUNT = 250000000),
        Col3 INT NOT NULL INDEX idx_Col3 NONCLUSTERED HASH WITH (BUCKET_COUNT = 250000000),
        Col4 INT NOT NULL INDEX idx_Col4 NONCLUSTERED HASH WITH (BUCKET_COUNT = 250000000)
    )
    WITH
    (
        MEMORY_OPTIMIZED = ON,
        DURABILITY = SCHEMA_AND_DATA
    )
    GO

After a few seconds the CREATE TABLE statement fails and In-Memory OLTP gives you a nice error message telling you that you have too little memory available:

    Msg 701, Level 17, State 137, Line 43
    There is insufficient system memory in resource pool 'default' to run this query.
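The bucket arithmetic can also be done in T-SQL. The following is only a sketch with variable names of my own. It assumes 8 bytes per Hash Bucket and it also takes into account that, according to the documentation, SQL Server rounds the requested BUCKET_COUNT up to the next power of two. With that rounding, 250 000 000 requested buckets become 268 435 456 buckets per Hash Index, so the 4 Hash Indexes need 8 GB for the buckets alone, a bit more than the figure calculated from the requested bucket count.

```sql
-- Sketch: estimate the bucket memory of the Hash Indexes.
-- Assumption: every bucket is an 8-byte pointer, and the requested
-- BUCKET_COUNT is rounded up to the next power of two.
DECLARE @RequestedBuckets BIGINT = 250000000;
DECLARE @IndexCount INT = 4;
DECLARE @ActualBuckets BIGINT = 1;

-- Double until the requested bucket count is reached or exceeded.
WHILE @ActualBuckets < @RequestedBuckets
    SET @ActualBuckets = @ActualBuckets * 2;

SELECT
    @ActualBuckets AS BucketsPerIndex,
    @RequestedBuckets * @IndexCount * 8 / 1024.0 / 1024 / 1024 AS RequestedGB,
    @ActualBuckets * @IndexCount * 8 / 1024.0 / 1024 / 1024 AS RoundedGB;
GO
```

Changing `@IndexCount` to 2 gives the numbers for a table with only 2 Hash Indexes.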

So far so good. Let’s redesign the table so that it only needs 3.7 GB of RAM:

    -- 250 000 000 x 2 = 500 000 000 Hash Buckets of 8 bytes: 4 000 000 000 bytes = 3.7 GB of memory overhead.
    -- The following query succeeds, because there is enough memory available.
    CREATE TABLE Foo
    (
        Col1 INT NOT NULL PRIMARY KEY NONCLUSTERED HASH WITH (BUCKET_COUNT = 250000000),
        Col2 INT NOT NULL INDEX idx_Col2 NONCLUSTERED HASH WITH (BUCKET_COUNT = 250000000)
    )
    WITH
    (
        MEMORY_OPTIMIZED = ON,
        DURABILITY = SCHEMA_AND_DATA
    )
    GO

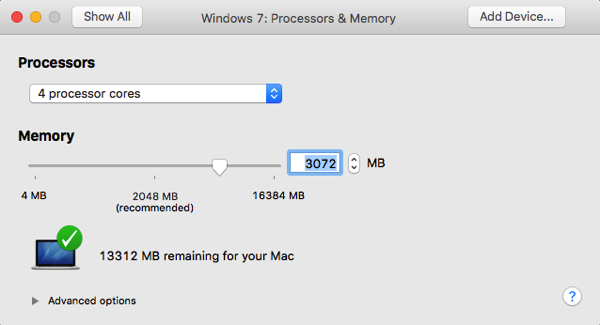
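If you want to verify how many Hash Buckets SQL Server has really allocated for a table like this, the `sys.dm_db_xtp_hash_index_stats` DMV can help. The following query is a minimal sketch that assumes the table `Foo` lives in the `dbo` schema. Be aware that this DMV scans the whole table, so it can take a while on large tables.

```sql
-- Bucket counts and chain lengths for the Hash Indexes of dbo.Foo.
SELECT
    OBJECT_NAME(his.object_id) AS TableName,
    i.name AS IndexName,
    his.total_bucket_count,
    his.empty_bucket_count,
    his.avg_chain_length,
    his.max_chain_length
FROM sys.dm_db_xtp_hash_index_stats AS his
INNER JOIN sys.indexes AS i
    ON i.object_id = his.object_id
    AND i.index_id = his.index_id
WHERE his.object_id = OBJECT_ID(N'dbo.Foo');
GO
```

The column `total_bucket_count` shows the bucket count that is actually in use for each Hash Index, which can be higher than the BUCKET_COUNT you have requested.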

This time the creation of the table succeeds, because I have enough RAM available. But now I get nasty: I shut down my Virtual Machine and reconfigure it to have only 3 GB of RAM:

When we now restart the Virtual Machine and SQL Server, what do you think happens? Do you think that SQL Server can bring our database online? Or do you think that only the PRIMARY file group comes online and the In-Memory file group stays off-line? Let’s try it!

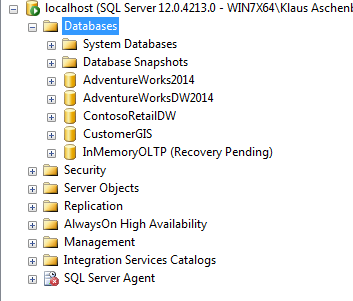

When you look in Object Explorer in SQL Server Management Studio after the restart, you can see that our *whole* database is in the Recovery Pending state!

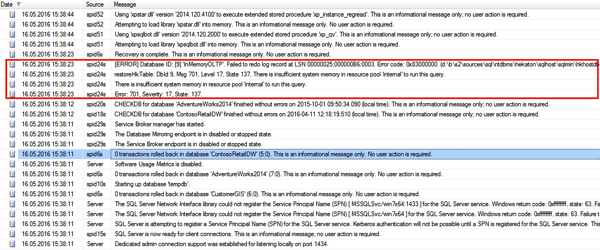
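You don't need Object Explorer to see this state. As a small sketch, the following query against `sys.databases` lists every database that is not online. A database that could not be recovered shows up with the `state_desc` value `RECOVERY_PENDING`.

```sql
-- List all databases that are currently not online.
SELECT
    name,
    state_desc
FROM sys.databases
WHERE state_desc <> N'ONLINE';
GO
```

Because `sys.databases` is a server-wide catalog view, you can run this query from any database that is still accessible, for example `master`.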

That’s really, really terrible, because you can no longer access any part of your database! Even your traditional Disk Based Tables are no longer accessible! When you look into the SQL Server Log, you can also see that SQL Server gives you an error message telling you that you have too little memory available:

Ouch, we have broken In-Memory OLTP in some way…

Summary

I know that the engineered scenario is a very rare case, but as I have said, I have already seen many situations where VMware admins just take RAM away from Virtual Machines. In combination with In-Memory OLTP it could mean that your complete database isn’t accessible anymore!

Please keep this in mind when you deploy databases based on In-Memory OLTP. You always have to think very carefully about your memory requirements, and you also have to project the future memory requirements accordingly.
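One way to keep an eye on this is to regularly compare the physical memory of the machine with the memory that In-Memory OLTP has currently allocated. The following query is only a rough sketch with column aliases of my own. It has to run in the context of the database that contains the Memory-Optimized Tables, and it only covers that single database.

```sql
-- Compare the physical memory of the machine with the memory currently
-- allocated to Memory-Optimized Tables and their indexes in this database.
SELECT
    sm.total_physical_memory_kb / 1024 AS PhysicalMemoryMB,
    sm.available_physical_memory_kb / 1024 AS AvailablePhysicalMemoryMB,
    xtp.AllocatedKB / 1024 AS InMemoryOltpAllocatedMB
FROM sys.dm_os_sys_memory AS sm
CROSS JOIN
(
    SELECT SUM(memory_allocated_for_table_kb + memory_allocated_for_indexes_kb) AS AllocatedKB
    FROM sys.dm_db_xtp_table_memory_stats
) AS xtp;
GO
```

If `PhysicalMemoryMB` suddenly drops, for example because RAM was taken away from the Virtual Machine, you have a chance to notice it before the next restart of SQL Server.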

Thanks for your time,

-Klaus

4 thoughts on “In-Memory OLTP and too less Memory”

I am just wondering how such technologies from other vendors work?

Hello Sir,

Thanks for your comment.

I can’t comment on technologies from other vendors…

Thanks,

-Klaus

What if the memory is increased again? Can we then bring the database back online from the Recovery Pending state?

Hello Aravindh,

Yes, the database is then online again.

Thanks,

-Klaus