In last weeks blog posting I have talked about Latches in SQL Server. At the end I have also introduced a little bit the idea of spinlocks to you. Based on that foundation I will continue today the discussion about spinlocks in SQL Server, and will show you how you can troubleshoot them.

Why do we need Spinlocks?

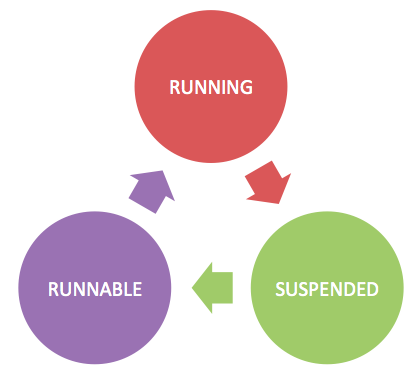

As I have already pointed it out last week, it doesn’t make sense to put a latch in front of every shared data structure, and synchronize the access to the data structure across multiple threads with the latch. A latch has a huge overhead associated with it: when you can’t acquire a latch (because someone else has already an incompatible latch acquired), the query is forced to wait, and enters the SUSPENDED state. The query waits in the SUSPENDED state until the latch can be acquired, and afterwards moves on into the RUNNABLE state. The query remains in the RUNNABLE state as long as no CPU is available for query execution. As soon as the CPU is free, the query moves again into the RUNNING state and can finally access the shared data structure which is protected with the latch, which was successfully acquired. The following picture shows the state machine that SQLOS implements for the cooperative thread scheduling.

Because of the associated overhead of latches, it doesn’t make sense to protect “busy” data structures with a latch. For that reason SQL Server also implements so-called Spinlocks. A spinlock is like a latch a lightweight synchronization object used by the storage engine to synchronize thread access to shared data structures. The main difference to a latch is that you actively wait for the spinlock – without leaving the CPU. A “wait” on a spinlock always happens on the CPU, in the RUNNING state. You spin in a tight loop until the spinlock is acquired. It’s a so-called busy wait. The main advantage of a spinlock is that no context switches are involved when a query must wait on a spinlock. On the other hand busy waiting wastes CPU cycles that other queries might be able to use more productively.

To avoid waisting too much CPU cycles, SQL Server 2008 R2 and higher implements a so-called exponential backoff mechanism, where the thread stops spinning after some time and sleeps on the CPU. The interval after which a thread goes to sleep increases over time between the attemps to acquire the spinlock. This behavior can reduce the impact on CPU performance.

Spinlocks & Troubleshooting

The main DMV for troubleshooting spinlocks is sys.dm_os_spinlock_stats. Every row that is returned by that DMV represents one specific spinlock in SQL Server. SQL Server 2014 implements 262 different spinlocks. Let’s have a more detailed look at the various columns in this DMV.

- name: The name of the spinlock

- collisions: The number of times that threads were blocked by a spinlock when trying to access a protected data structure

- spins: The number of times threads spinned in a loop trying to obtain the spinlock

- spins_per_collision: Ratio between spins and collisions

- sleep_time: The time that threads were sleeping because of a backoff

- backoffs: The number of times that threads were backed-off to allow other threads to continue on the CPU

The most important column in this DMV is backoffs, because this column tells you how often a backoff event occurred for a specific spinlock type. And very high backoffs yield to high CPU consumption and a so-called Spinlock Contention in SQL Server. I have already seen SQL Server installations where 32 cores were running at 100% without performing any work – a typical symptom for spinlock contention.

To troubleshoot a spinlock contention problem in more detail you can use the XEvent sqlos.spinlock_backoff provided by Extended Events. This event is always raised when a backoff occurs. If you capture this event, you also have to make sure that you use a very good selective predicate, because backoffs will always occur in SQL Server. A good predicate can be a specific spinlock type, where you have already seen high backoffs through the above mentioned DMV. The following code sample shows how you can create such an XEvent session.

-- Retrieve the type value for the LOCK_HASH spinlock.

-- That value is used by the next XEvent session

SELECT * FROM sys.dm_xe_map_values

WHERE name = 'spinlock_types'

AND map_value = 'LOCK_HASH'

GO

-- Tracks the spinlock_backoff event

CREATE EVENT SESSION SpinlockContention ON SERVER

ADD EVENT sqlos.spinlock_backoff

(

ACTION

(

package0.callstack

)

WHERE

(

[type] = 129 -- <<< Value from the previous query

)

)

ADD TARGET package0.histogram

(

SET source = 'package0.callstack', source_type = 1

)

GO

As you can see from the listing, I use here the histogram target to bucketize on the callstack. Therefore you can see which code path within SQL Server generated the highest backoffs for the specific spinlock type. You can even symbolize the call stack by enabling trace flag 3656. As a prerequisite you need to install the public symbols of SQL Server. Paul Randal (Blog, Twitter) has written a blog posting about it. Here you can see an output from this XEvent session.

sqldk.dll!XeSosPkg::spinlock_backoff::Publish+0x138

sqldk.dll!SpinlockBase::Sleep+0xc5

sqlmin.dll!Spinlock<129,7,1>::SpinToAcquireWithExponentialBackoff+0x169

sqlmin.dll!lck_lockInternal+0x841

sqlmin.dll!XactWorkspaceImp::GetSharedDBLockFromLockManager+0x18d

sqlmin.dll!XactWorkspaceImp::GetDBLockLocal+0x15b

sqlmin.dll!XactWorkspaceImp::GetDBLock+0x5a

sqlmin.dll!lockdb+0x4a sqlmin.dll!DBMgr::OpenDB+0x1ec

sqlmin.dll!sqlusedb+0xeb

sqllang.dll!usedb+0xb3

sqllang.dll!LoginUseDbHelper::UseByMDDatabaseId+0x93

sqllang.dll!LoginUseDbHelper::FDetermineSessionDb+0x3e1

sqllang.dll!FRedoLoginImpl+0xa1b

sqllang.dll!FRedoLogin+0x1c1

sqllang.dll!process_request+0x3ec

sqllang.dll!process_commands+0x4a3

sqldk.dll!SOS_Task::Param::Execute+0x21e

sqldk.dll!SOS_Scheduler::RunTask+0xa8

sqldk.dll!SOS_Scheduler::ProcessTasks+0x279

sqldk.dll!SchedulerManager::WorkerEntryPoint+0x24c

sqldk.dll!SystemThread::RunWorker+0x8f

sqldk.dll!SystemThreadDispatcher::ProcessWorker+0x3ab

sqldk.dll!SchedulerManager::ThreadEntryPoint+0x226

With the provided call stack, it is not that hard to identify in which area of SQL Server the spinlock contention occurred. In that specific call stack the contention occurred in the LOCK_HASH spinlock type that protects the hashtable of the lock manager. Every time when a lock or unlock operation in the lock manager is executed, a spinlock must be acquired on the corresponding hash bucket. As you can also see from the call stack the spinlock was acquired when calling the function GetSharedDBLockFromLockManager from the class XactWorkspaceImp. It’s an indication that a shared database lock was tried to be acquired, when connecting to a database. And this finally yielded to a spinlock contention in the LOCK_HASH spinlock with very high backoffs.

If you attend my talk Latches, Spinlocks, and Lock Free Data Structures at SQLbits (Telford, UK) in 2 weeks or at the SQLPASS Summit in Seattle in November, I will also show you how you can reproduce this spinlock contention, how to troubleshoot it, and finally how you can resolve it.

Summary

In this blog posting you have learned more about spinlocks in SQL Server. In the first part we have discussed why SQL Server needs to implement spinlocks. As you have seen, with spinlocks it’s just cheaper to protect a “busy” shared data structure from concurrent thread access – like the lock manager. And in the second section we had a more detailed look on how you can troubleshoot spinlock contention in SQL Server, and how you can identify with a symbolized call stack the root cause of the problem.

Thanks for reading!

-Klaus

4 thoughts on “Introduction to Spinlocks in SQL Server”

Pretty much interested in Query Tuning and Performance Tuning and Troubleshooting workshops.

Due to various reasons , many of the audience couldnt be able to make through long way to attend the sessions .

Considering the interests of wide number of audince global wide , it is really appreciable if there is an option for offline content / course for the same workshops , so that everyone can go through it at reasonable price. Just my two cents and humble request.

Hello,

Thanks for your feedback.

Currently I only provide my trainings as offline trainings face-to-face.

There are currently no plans to deliver these trainings online.

Thanks,

-Klaus

good information regarding spin locks

Hi Klaus,

thanks for the interesting post. We are actually also facing the spinlock contention (SOS_OBJECT_STORE). Here is the callstack:

sqldk.dll!XeSosPkg::spinlock_backoff::Publish+0x138

sqldk.dll!SpinlockBase::Sleep+0xc5

sqlmin.dll!Spinlock<250,1,1>::SpinToAcquireWithExponentialBackoff+0x169

sqlmin.dll!LockManager::GetLockBlocks+0x76

sqlmin.dll!lck_lockInternal+0x310

sqlmin.dll!GetLock+0x1ea

sqlmin.dll!BTreeRow::AcquireLock+0x210

sqlmin.dll!IndexRowScanner::AcquireNextRowLock+0xb1

sqlmin.dll!IndexDataSetSession::GetNextRowValuesInternal+0x1265

sqlmin.dll!RowsetNewSS::FetchNextRow+0x197

sqlmin.dll!CQScanRowsetNew::GetRowWithPrefetch+0x51

sqlmin.dll!CQScanRangeNew::GetRow+0x15b

sqlmin.dll!CQScanNLJoinTrivialNew::GetRow+0x196

sqlmin.dll!CQScanBatchSortNew::BuildSortTable+0x166

sqlmin.dll!CQScanBatchSortNew::GetRowHelper+0x4d7

sqlmin.dll!CQScanRangePrefetchDelayNew::GetRow+0xd6

sqlmin.dll!CQScanNLJoinTrivialNew::GetRow+0xe5

sqlmin.dll!CQScanProjectNew::GetRow+0x25

sqlmin.dll!CQueryScan::GetRow+0x81

sqllang.dll!CXStmtQuery::ErsqExecuteQuery+0x54a

sqllang.dll!CXStmtSelect::XretExecute+0x2e7

sqllang.dll!CMsqlExecContext::ExecuteStmts<1,0>+0x1782

sqllang.dll!CMsqlExecContext::FExecute+0xa3a

sqllang.dll!CSQLSource::Execute+0x86c

Do you have any advice for me how to get rid off this spinlock? We already revised transaction isolation levels. Can partitioning help us?

Thank you in advance for your answer.

Miloslav.