In this blog posting I want to explain in more detail why you should avoid Auto Growth operations on the Transaction Log. In my consulting engagements I see a lot of SQL Server databases that are running with the default file configuration for the initial size and the Auto Growth settings of the Transaction Log. People often just rely on the Auto Growth mechanism, because it *just* works. Of course it just works, but you are paying a lot for it, as you will see very shortly.

Just relying on the Auto Growth mechanism of the Transaction Log is always a bad idea. First of all, it leads to serious Log Fragmentation (a large number of Virtual Log Files, VLFs), which has a negative impact on the time it takes to run Crash Recovery on your database during a SQL Server startup or a Cluster Failover. In addition, the write transactions in your database have to wait as soon as the Transaction Log triggers the Auto Growth mechanism.
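If you want to check how your own Transaction Logs are currently configured, a minimal sketch like the following can help. It only reads the catalog view sys.master_files; the column aliases are my own choice for illustration, and the conversion assumes the usual 8 KB page size.

```sql
-- Sketch: list size and Auto Growth setting of every Transaction Log file
-- on the instance. size and growth are stored in 8 KB pages, unless
-- is_percent_growth = 1, in which case growth is a percentage.
SELECT
    DB_NAME(database_id) AS database_name,
    name AS logical_file_name,
    CAST(size AS BIGINT) * 8 / 1024 AS size_mb,
    CASE
        WHEN is_percent_growth = 1
            THEN CAST(growth AS VARCHAR(20)) + ' %'
        ELSE CAST(CAST(growth AS BIGINT) * 8 / 1024 AS VARCHAR(20)) + ' MB'
    END AS growth_setting
FROM sys.master_files
WHERE type_desc = N'LOG'
ORDER BY database_name;
```

A very small size_mb combined with a percentage or a very small growth value is usually a sign that the database is still running with the default file configuration.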

When the Auto Growth mechanism of the Transaction Log kicks in, SQL Server *always* has to zero-initialize the new chunk that is added to the end of the file. It doesn’t matter whether your SQL Server instance is running with the Instant File Initialization privilege or not: the Transaction Log is *always* zero-initialized. The reason for that is quite simple: when SQL Server has done a wrap-around of the Transaction Log in the past, Crash Recovery has to know where to stop. And the stop mark is exactly the place where Crash Recovery finds zeros instead of valid Transaction Log Records.

The problem with the zero-initialization is that it can take a long time (depending on your Auto Growth increment and the speed of your storage). During that time no other transaction can write Transaction Log Records into the Transaction Log. They are all blocked by a latch that is taken on the Transaction Log Manager. So your write transactions are put into the suspended state (until they can acquire the necessary latch), and they are waiting, and waiting, and waiting, until the Auto Growth of your Transaction Log is completed. Let’s demonstrate this behavior with a simple example.
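To get a feeling for how long past Auto Growth operations of the Transaction Log took on an instance, you can look into the default trace. The following is only a sketch: it assumes that the default trace is still enabled (it is out of the box) and that the relevant events have not yet been rolled out of the trace files. Event class 93 is the Log File Auto Grow event.

```sql
-- Sketch: list recent Log File Auto Grow events from the default trace.
-- Assumption: the default trace is enabled on this instance.
DECLARE @trace_path NVARCHAR(260);

SELECT @trace_path = path
FROM sys.traces
WHERE is_default = 1;

SELECT
    DatabaseName,
    FileName,
    StartTime,
    Duration / 1000 AS duration_ms,                        -- Duration is reported in microseconds
    CAST(IntegerData AS BIGINT) * 8 / 1024 AS growth_mb    -- IntegerData = number of 8 KB pages added
FROM sys.fn_trace_gettable(@trace_path, DEFAULT)
WHERE EventClass = 93                                      -- Log File Auto Grow
ORDER BY StartTime DESC;
```

Every row is a period during which the write transactions of that database had to wait.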

In the first step I’m creating a new database for this demonstration. I’m not using the default settings here, because I’m specifying an Auto Growth increment of 10 GB for the Transaction Log. This is a really, really bad configuration, and I’m only using it to show you the side effects of this setting. Please never ever use it in your production databases!

-- Create a new database with 10 GB Auto Growth for the Transaction Log
CREATE DATABASE AutoGrowthTransactionLog ON PRIMARY
(
    NAME = N'AutoGrowthTransactionLog',
    FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL11.MSSQLSERVER\MSSQL\DATA\AutoGrowthTransactionLog.mdf',
    SIZE = 5120KB,
    FILEGROWTH = 1024KB
)
LOG ON
(
    NAME = N'AutoGrowthTransactionLog_log',
    FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL11.MSSQLSERVER\MSSQL\DATA\AutoGrowthTransactionLog_log.ldf',
    SIZE = 1024KB,
    FILEGROWTH = 10240000KB -- 10 GB Auto Growth!
)
GO

In the next step I’m creating two tables in the database. With the 1st table I’m just filling up the Transaction Log very quickly by inserting some records. During the Auto Growth phase of the Transaction Log we will then try to insert a new record into the 2nd table, to prove that this transaction is blocked by the Auto Growth mechanism.

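-- Switch to the new database, so that the tables are created there
USE AutoGrowthTransactionLog
GO
-- Create a new table, every record needs a full page of 8 KB
CREATE TABLE Chunk
(
    Col1 INT IDENTITY PRIMARY KEY,
    Col2 CHAR(8000)
)
GO
-- Another simple table
CREATE TABLE Foo
(
    Bar INT NOT NULL
)
GO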

By now we have created all the necessary database objects, so I’m now starting to fill up the Transaction Log by beginning a new transaction without committing it immediately:

-- Begin a new transaction, that blocks the 1st VLF in the Transaction Log
BEGIN TRANSACTION
INSERT INTO Chunk VALUES (REPLICATE('x', 8000))
GO

Because we now have an ongoing, uncommitted transaction, SQL Server can’t reuse the part of the Transaction Log where the Transaction Log Records of this transaction are stored. They are still needed for a possible rollback. So I’m now filling up the Transaction Log by inserting 66 additional records from a different session:

INSERT INTO AutoGrowthTransactionLog.dbo.Chunk VALUES (REPLICATE('x', 8000))
GO 66
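While the first transaction is still open, you can verify from any session why the Transaction Log can’t be reused and how full it already is. This is just a sketch using standard commands; note that log_reuse_wait_desc reflects the state of the last log truncation attempt, so it may only show ACTIVE_TRANSACTION after a checkpoint has occurred.

```sql
-- Sketch: inspect the state of the Transaction Log while the
-- transaction from the 1st session is still uncommitted.
USE AutoGrowthTransactionLog;
GO

-- Log size and percentage of log space used, for every database
DBCC SQLPERF(LOGSPACE);

-- Why can the log currently not be reused?
SELECT name, recovery_model_desc, log_reuse_wait_desc
FROM sys.databases
WHERE name = N'AutoGrowthTransactionLog';

-- Oldest active transaction in the current database
DBCC OPENTRAN;
GO
```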

-- Commit the transaction that is still open in the 1st session
-- Execute this code in the 1st session
COMMIT

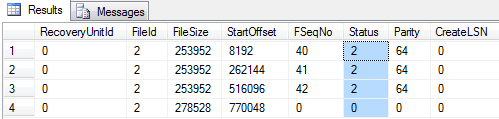

And finally we are committing our first transaction. This means now we have a Transaction Log in front of us, that is almost full. We can prove that fact through DBCC LOGINFO:
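A minimal sketch of that check looks like the following. DBCC LOGINFO is an undocumented command that returns one row per VLF. The second query is an alternative that I’m adding here as an assumption about your environment: the function sys.dm_db_log_info is only available on SQL Server 2016 SP2 and later, so it will not work on the SQL Server 2012 instance used in this demo.

```sql
USE AutoGrowthTransactionLog;
GO

-- One row per VLF; a Status of 2 means that the VLF is still active
DBCC LOGINFO;
GO

-- Alternative on SQL Server 2016 SP2 and later (assumption: newer instance)
SELECT vlf_sequence_number, vlf_size_mb, vlf_active, vlf_status
FROM sys.dm_db_log_info(DB_ID());
GO
```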

When we now want to insert another record into the table Chunk, the Transaction Log has no free space available anymore, and SQL Server kicks off the Auto Growth of the Transaction Log.

-- This statement will trigger the Auto Growth mechanism!
INSERT INTO Chunk VALUES (REPLICATE('x', 8000))
GO

To monitor what happens in the meantime during the Auto Growth, you can open a different session window in SQL Server Management Studio, and try to insert another record into our 2nd table, the table Foo:

-- This statement is now blocked by the Auto Growth mechanism.
INSERT INTO Foo VALUES (1)
GO

This SQL statement will just block, because the transaction has to write Transaction Log Records into the Transaction Log, which is currently not available. To further analyze this blocking situation, you can open a 3rd session window and execute the following 2 SQL statements:

-- Analyze the blocking situation
-- 52 and 54 are the IDs of the two sessions in this demo, yours will most likely differ
SELECT wait_type, * FROM sys.dm_exec_requests
WHERE session_id IN (52, 54)

SELECT wait_type, * FROM sys.dm_os_waiting_tasks
WHERE session_id IN (52, 54)
GO

As you can see from the listing, I’m just looking into the DMVs sys.dm_exec_requests and sys.dm_os_waiting_tasks for both sessions: the session that triggered the Auto Growth, and the session that is currently blocked by the Auto Growth mechanism. The session that triggered the Auto Growth reports a so-called Preemptive Wait Type, in our case the wait type PREEMPTIVE_OS_WRITEFILEGATHER. A preemptive wait type is reported when SQL Server executes a Win32 API function that runs outside of its own scheduling mechanism. In our case the Auto Growth is done through the Win32 API function WriteFileGather (see http://msdn.microsoft.com/en-us/library/windows/desktop/aa365749(v=vs.85).aspx for further information about it).
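If you don’t know the session IDs upfront, you can also search for the wait type itself. The following sketch lists every request that is currently sitting in the WriteFileGather call; the selected columns are just the ones I find useful here.

```sql
-- Sketch: find the session(s) that are currently growing a file,
-- without hard-coding any session ID.
SELECT
    r.session_id,
    r.status,
    r.command,
    r.wait_type,
    r.wait_time AS wait_time_ms,
    DB_NAME(r.database_id) AS database_name
FROM sys.dm_exec_requests AS r
WHERE r.wait_type = N'PREEMPTIVE_OS_WRITEFILEGATHER';
```

Keep in mind that this wait type is not exclusive to the Auto Growth of the Transaction Log. Other operations that zero-initialize a file can report it as well, so treat a hit as a hint and not as proof.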

The INSERT statement that tries to insert a new record into the table Foo reports the wait type LATCH_EX. As you can see from the column resource_description of the DMV sys.dm_os_waiting_tasks, that latch has to be acquired on the Log Manager of SQL Server. You can also cross-check that assumption by querying the DMV sys.dm_os_latch_stats and restricting on the latch class LOG_MANAGER. As you will see, there are some waits on that specific latch. The latch was acquired by the transaction that triggered the Auto Growth of the Transaction Log, and every other writing transaction is blocked until it can acquire this latch itself. So when you see high wait times on that latch on your systems, this is also an indication that you are dealing with Auto Growth problems in your Transaction Log.
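The cross-check described above can be sketched with the following two queries. The first one returns the accumulated waits on the LOG_MANAGER latch class since the last restart (or since the statistics were cleared), and the second one lists the tasks that are waiting on that latch right now.

```sql
-- Accumulated waits on the Log Manager latch
SELECT latch_class, waiting_requests_count, wait_time_ms, max_wait_time_ms
FROM sys.dm_os_latch_stats
WHERE latch_class = N'LOG_MANAGER';

-- Tasks that are currently blocked on the Log Manager latch
SELECT session_id, wait_type, wait_duration_ms, blocking_session_id, resource_description
FROM sys.dm_os_waiting_tasks
WHERE wait_type = N'LATCH_EX'
  AND resource_description LIKE N'%LOG_MANAGER%';
```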

I hope that I have convinced you with this blog posting that relying on the Auto Growth mechanism of the Transaction Log is not the best solution. As you have seen with this very simple example, every writing transaction is blocked by an Auto Growth operation in your database, and this will definitely hurt the throughput and the scalability of your database. To make sure that you have a well-performing Transaction Log, you can also use the best practices that Kimberly Tripp has published on that topic.
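As a starting point, you can pre-size the Transaction Log and choose a moderate, fixed Auto Growth increment, so that Auto Growth only acts as a safety net. The following is just a sketch for a freshly created copy of the demo database: the values 4096MB and 512MB are placeholders that I made up for illustration, not a recommendation and not taken from Kimberly Tripp’s guidance. The right numbers depend on your workload and on your storage.

```sql
-- Sketch: pre-size the Transaction Log in a maintenance window.
-- 4096MB is a hypothetical value; SIZE must be larger than the current file size.
ALTER DATABASE AutoGrowthTransactionLog
MODIFY FILE (NAME = N'AutoGrowthTransactionLog_log', SIZE = 4096MB);
GO

-- Use a fixed, moderate Auto Growth increment as a safety net.
-- 512MB is again only a hypothetical value.
ALTER DATABASE AutoGrowthTransactionLog
MODIFY FILE (NAME = N'AutoGrowthTransactionLog_log', FILEGROWTH = 512MB);
GO
```

I’m changing the two properties in separate statements here on purpose, to stay on the safe side with the documented MODIFY FILE syntax.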

Call to action: please feel free to leave a comment about the settings with which you are running your various Transaction Logs in production.

Thanks for reading

-Klaus